Lost in knowledge translation? Time to get strategic

8 August 2018

Our recent Forward Thinking posts have discussed funders’ involvement in knowledge translation (KT) research, and KT as a social change project. Now, MSFHR’s President & CEO Dr. Bev Holmes talks KT strategy and evaluation, and challenges funders — and other organizations working to improve evidence use — to take a hard look at their KT activities as a whole and make sure they are focused in the right areas for maximum impact.

Forward Thinking is MSFHR’s blog, focusing on what it takes to be a responsive and responsible research funder.

Warning! This blog post contains a logic model.

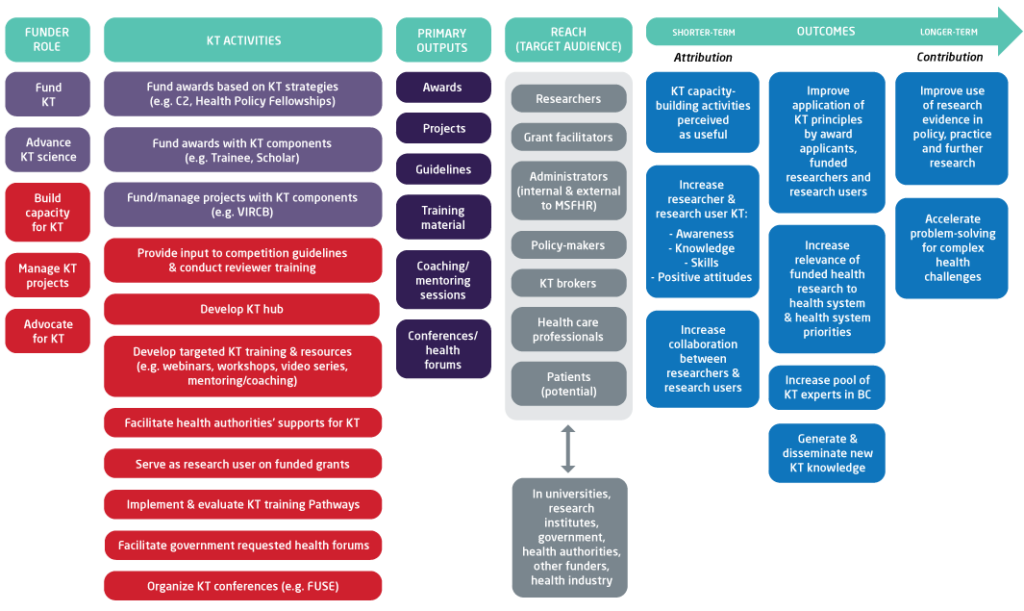

Perhaps an odd way to start a blog post, a warning about logic models, but the much-maligned things provoke a violent reaction in some people. The world seems divided into those who decry their linearity and those who defend their… well… logic. At the Michael Smith Foundation for Health Research, we’ve found them helpful for modelling a theory of change about our KT strategy. Our model is still a work in progress, but building it has been a highly useful exercise: we’re excited about how it will increase the effectiveness and efficiency of our work in this area, and we think other organizations could benefit from similar, serious consideration of what they do related to KT and why, and whether change is necessary.

The title of the post, as many involved in KT will recognize, plays on an influential, oft-cited article by Ian Graham and colleagues, who in 2006 offered a road map for those confused by this fast-growing field. Twelve years later, we think there’s a different kind of “lost” happening. Knowledge translation has exploded, and funders and many other research organizations are engaged in a dizzying array of related activities, often without a good sense of which provide the best return on investment. In fact, as Robert McLean and colleagues (Ian Graham among them) say in a just-published article, it is “paradoxical that funders’ efforts to get evidence into practice are not themselves evidence-based.”

Two commitments and our work to date

MSFHR is committed to evidence-informed practice in our own work, as explained in the kick-off to this blog series. We’re also committed to KT: we believe funders have an important role to play in maximizing the use of research evidence.

Those commitments led us to publish, after a literature review, an environmental scan, and broad circulation of an iterative discussion document, a paper that describes five areas that together create the conditions for effective KT. Funders don’t necessarily have to work in all these areas themselves, but we argue they do need to ensure that someone in the system is either making them happen or helping them happen. The areas are:

- Building capacity for KT (e.g. training and resources)

- Doing KT/managing KT projects (e.g. researcher-decision maker forums, other events)

- Funding KT (e.g. awards)

- Advancing the science of KT (e.g. involvement in research)

- Advocacy for KT (e.g. encouraging and influencing system change)

We have a variety of KT activities underway in each of these areas, and we’re confident we’re on the right track. But how effective are we? And as important, how efficient are we? Here’s where our logic model comes in.

The big question(s)

At the highest level, we want to know how we as a funder can best help increase the use of health research evidence. Development of our five areas took us part of the way, but the word “best” in that sentence demands that we regularly evaluate our KT work at the organizational level.

We want to contribute to improved use of evidence in policy, practice and further research, as well as accelerate problem-solving (logic model, right-hand side). And we have a strong sense that our five areas (logic model, left-hand side) will get us there. In between, we assume that our activities and outputs under each of these areas will result in short- and medium-term outcomes that we can measure. Given that logic models don’t fully acknowledge the complex systems in which activities and outputs unfold, we are also drawing on our work on KT in complexity as part of our evaluation.

MSFHR’s knowledge translation program logic model

Our evaluation questions are:

- How effective is our KT strategy in achieving short- and medium-term KT outcomes?

- What factors contribute to the success of our KT strategy?

- How could the strategy be improved?

We’re looking at reach (how many people, and which target groups, we connected with), usefulness (relevance, quality, satisfaction) and use (what happens afterwards: how our KT work has influenced individual or system change related to evidence use). Ultimately, we need to understand where we can have the most influence, how much we should do in which areas, and for what benefits and impacts.

Where are we now?

We’re currently evaluating our last three years’ work, taking a mixed-methods approach: analyzing quantitative data where we have it and filling out the story with qualitative data. Going forward, we’ll be collecting and analyzing data regularly.

For this initial evaluation, key informant interviews, analysis of existing data (for example, feedback from training and webinars) and a short-term activity analysis are complete; a survey is underway. The interview findings suggest MSFHR has helped raise the profile of and legitimize KT in BC, supported the creation of a common language and understanding, and been a catalyst for a valued KT community of practice.

But the most fascinating results will likely be those from an extended activity analysis. For example, are there opportunities to optimize our team’s efforts for broader impact? We know we currently spend a lot of time and effort on capacity building (specifically, workshops) for potentially less overall impact than funding KT, or advocating for change at a system level, can generate. From a business perspective, specifically for capacity building, could our time be better invested in facilitating provincial, large-scale training efforts? From an efficiency perspective, should we increase our efforts to support the growing expert KT community in BC through funding and facilitation? Should we step up our work to advocate for change at the system level, for example, by dealing with mismatched incentives in the research enterprise?

Where to from here?

Rob McLean et al.’s challenge to funding agencies, that their KT efforts should be evidence-based, is one MSFHR has taken on enthusiastically. Our organizational KT evaluation, supported by our logic model and an understanding of complex systems, is already raising important questions like those above, and will ultimately give us the information we need to ensure we’re focusing our efforts in the right areas.

We think many organizations would benefit from an in-depth analysis of their KT activities to help them make sound business decisions, and we’d love to hear from those who are interested. Our evaluation framework is available on our website, and we’ll be sharing the results of the evaluation itself this fall. For more information or to share your thoughts with us, please email our KT Director Gayle Scarrow.